Introducing MacNoise!

🍎 MacNoise

It’s been quite a while since my last blog post, but I’ve been busy working on a project I’m thrilled to talk about: MacNoise. MacNoise is a modular macOS telemetry noise generator for EDR testing and security research. It generates real system events (network connections, file writes, process spawns, plist mutations, TCC permission probes, and more) so security teams can validate that their EDR, SIEM, and firewall tooling detects what it is supposed to detect.

Repo Link: https://github.com/0xv1n/macnoise

Motivation

Defenders and security practitioners often do not know the full extent of an environment’s visibility into system behavior. This can stem from over-reliance on EDR without validating how much telemetry is actually recorded and logged, or from logging levels on various appliances being set too low. Anyone who has worked in incident response has, at least once, encountered an intrusion or breach where a system was not logging critical telemetry (process creation events, DNS traffic, command-line auditing). This project aims to give defenders a way to find gaps in endpoint telemetry before an incident.

This project began as:

- a way for me to learn a new language (🦫)

- a chance for me to expand beyond the common and saturated Windows tooling

Once I had a basic understanding of the system API calls, I realized this could be repurposed for easy, scalable detection validation and telemetry auditing. I also hope this project can serve as an expansion of the EDR-Telemetry project (hi, Kostas!😏). While there is crossover between macOS and Linux-ish systems, there is still a vast wealth of macOS-specific telemetry that often goes unlogged or underappreciated 😭.

Project Architecture

Modules

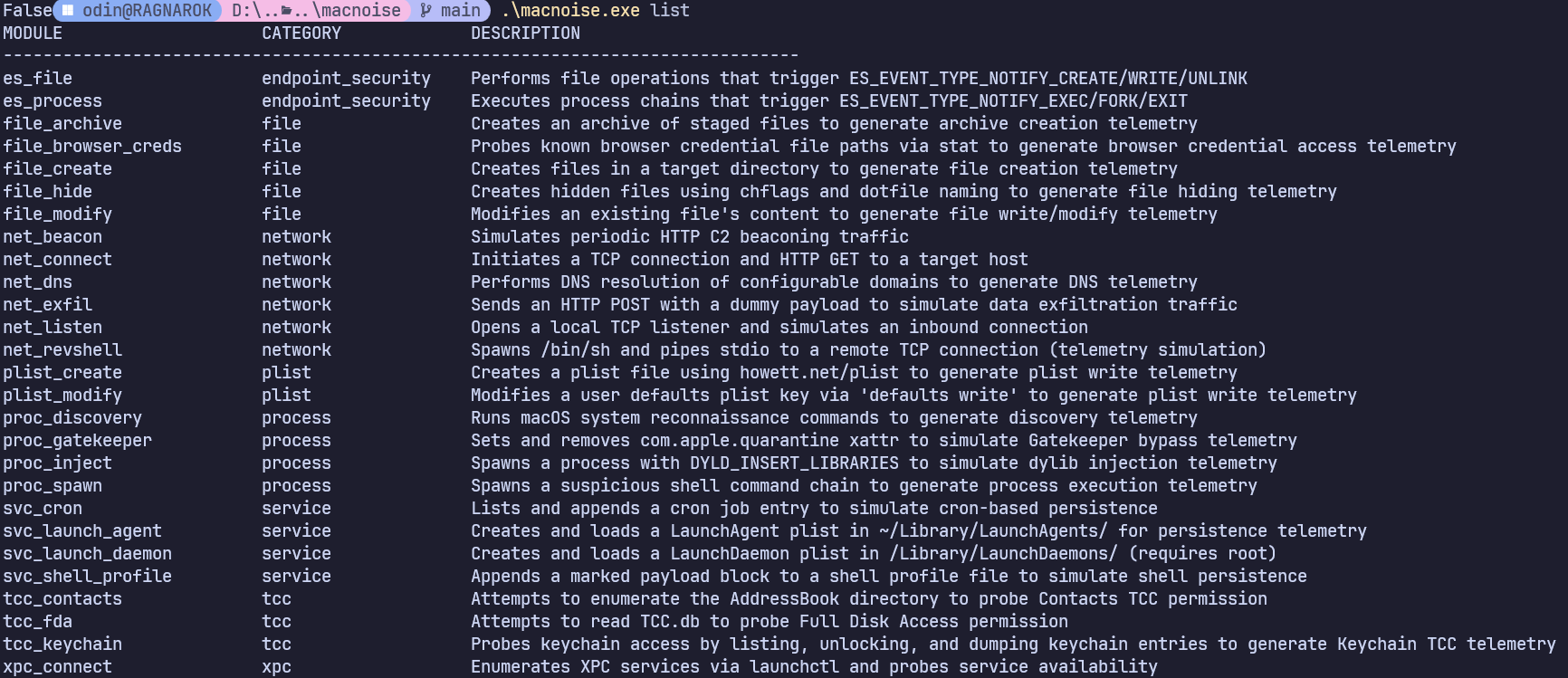

The project is designed to be easily extensible, so practitioners can customize it for their needs. While it has a good amount of coverage for various telemetry types, it is not feature complete, and I will rely on the community (and my own free time) to keep expanding supported telemetry. To view the currently available modules, just run `macnoise list`.

For detailed breakdowns of these modules, I encourage you to read the… README.

Execution

MacNoise builds a cross-platform binary with an intuitive REPL. A handful of commands are available:

- `macnoise run <module> [--param key=val ...]`: run a specific module
- `macnoise run --category <cat>`: run all modules in a category
- `macnoise run --all`: run all modules
- `macnoise list [--category <cat>]`: list modules
- `macnoise info <module>`: show module details, params, and MITRE mappings
- `macnoise scenario <file.yaml>`: run a YAML scenario
- `macnoise categories`: list categories with module counts
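The repeatable `--param key=val` syntax maps naturally onto Go's standard `flag` package via a custom `flag.Value`. The sketch below is an assumption about how such parsing could work, not MacNoise's actual argument handling; `params` and `parseRun` are hypothetical names.

```go
package main

import (
	"flag"
	"fmt"
	"io"
	"strings"
)

// params collects repeated --param key=val flags into a map.
type params map[string]string

func (p params) String() string { return fmt.Sprint(map[string]string(p)) }

// Set is called once per --param occurrence; it splits on the first '='.
func (p params) Set(v string) error {
	key, val, ok := strings.Cut(v, "=")
	if !ok || key == "" {
		return fmt.Errorf("expected key=val, got %q", v)
	}
	p[key] = val
	return nil
}

// parseRun parses arguments shaped like "run <module> [--param key=val ...]"
// (the "run" word itself already consumed by the caller).
func parseRun(args []string) (string, params, error) {
	if len(args) == 0 {
		return "", nil, fmt.Errorf("missing module name")
	}
	fs := flag.NewFlagSet("run", flag.ContinueOnError)
	fs.SetOutput(io.Discard) // report errors via the return value only
	p := params{}
	fs.Var(p, "param", "module parameter as key=val (repeatable)")
	if err := fs.Parse(args[1:]); err != nil {
		return "", nil, err
	}
	return args[0], p, nil
}

func main() {
	module, p, err := parseRun([]string{"net_connect", "--param", "target=127.0.0.1", "--param", "port=4444"})
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	fmt.Println(module, p["target"], p["port"]) // net_connect 127.0.0.1 4444
}
```

Go's `flag` package treats `-param` and `--param` identically, and `--param=key=val` also works because only the first `=` separates the flag name from its value.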

Additional configuration flags can also be passed at runtime.

Testing

There is a built-in testing mode (dry run) that lets you preview the actions a module will take. This can be combined with audit logging (outlined below) to preview behaviors and eye-check the telemetry a run would produce.
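A dry run is easiest to implement when a module describes its side effects before performing them. The Go sketch below shows that idea with a hypothetical `action` type and `execute` function; it is an illustration of the pattern under that assumption, not MacNoise's code.

```go
package main

import (
	"fmt"
	"os"
	"path/filepath"
)

// action is one side effect a module plans to perform (hypothetical type).
type action struct {
	desc string
	do   func() error
}

// execute performs each action, or in dry-run mode only records what would
// have happened, so the plan can be reviewed before any telemetry is emitted.
func execute(actions []action, dryRun bool) ([]string, error) {
	var log []string
	for _, a := range actions {
		if dryRun {
			log = append(log, "[dry-run] would "+a.desc)
			continue
		}
		if err := a.do(); err != nil {
			return log, fmt.Errorf("%s: %w", a.desc, err)
		}
		log = append(log, "did "+a.desc)
	}
	return log, nil
}

func main() {
	path := filepath.Join(os.TempDir(), "macnoise_dryrun_example.txt")
	actions := []action{
		{desc: "write " + path, do: func() error { return os.WriteFile(path, []byte("noise\n"), 0o644) }},
		{desc: "remove " + path, do: func() error { return os.Remove(path) }},
	}
	lines, err := execute(actions, true) // dry run: nothing touches the filesystem
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	for _, l := range lines {
		fmt.Println(l)
	}
}
```

Keeping the description and the side effect in the same value means the dry-run preview cannot drift from what a real run would do.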

Audit Logging

MacNoise produces two distinct output streams:

- Telemetry events: `TelemetryEvent` records written to stdout (or to a file via `--output`) by every module. These describe the activity your EDR, SIEM, or firewall should see.
- Audit records: a separate, structured log of what MacNoise itself did (which modules ran, prerequisite outcomes, timing, events emitted, cleanup results, and MITRE technique mappings). These should be sent to your SIEM so you can validate job runs and identify missing logs.

Audit records and telemetry events are written as JSONL and follow the OCSF (Open Cybersecurity Schema Framework) schema, including its object mapping definitions. Each record is a valid OCSF class, with MacNoise-specific fields placed in the `unmapped` object so the record remains schema-compliant 💪.

Enable audit logging with `--audit-log`, and preview events without emitting telemetry via `--dry-run`.

Demo

The Cool Stuff

As I created more modules, I began to wonder whether I could operationalize them with threat intelligence to emulate malware or APT activity. Enter: SCENARIOS (firework sounds, airhorns).

Scenarios

Scenarios let users design a sequence of activity that emulates adversary tradecraft, finding gaps in logging coverage along the way. The repo includes a few sample scenarios so you can see how easy they are to write. Scenarios are defined in intuitive YAML, for example:

name: EDR Validation Suite
description: Modules most relevant to EDR detection validation — covers process, network, file, persistence, and TCC telemetry
steps:
  - module: proc_spawn
    params:
      command: "echo 'Telemetry Payload Executed' && id"
  - module: proc_inject
    params:
      dylib_path: "/tmp/macnoise_inject.dylib"
      target: "/usr/bin/true"
  - module: proc_signal
    params:
      target_command: "sleep 5"
  - module: net_connect
    params:
      target: "127.0.0.1"
      port: "4444"
  - module: net_dns
    params:
      domains: "example.com,pastebin.com,raw.githubusercontent.com"
  - module: file_create
    params:
      base_dir: "/tmp/macnoise_edr"
      count: "5"
      prefix: "edr_test_"
  - module: svc_launch_agent
    params:
      label: "com.macnoise.edrtest"
      program: "/usr/bin/true"
  - module: tcc_fda
  - module: tcc_contacts

Because the audit logging wrapper tags jobs with correlation IDs, it is easy to pivot in a SIEM, validate each module, and identify gaps in your environment. Scenario YAMLs can be generated with relative ease by passing a threat intelligence report, along with the AI Guidance documentation in the repo, to the LLM of your choice. My hope is that people share scenarios and that they help answer those pesky “what is our coverage?” RFIs.
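The correlation-ID workflow can be sketched in a few lines of Go: tag every step of a run with one shared ID, then compare the steps against whatever the SIEM returned for that ID. The 128-bit hex ID format, `auditTag`, `tagSteps`, and `missing` are hypothetical; in practice the `observed` set would come from a SIEM query rather than a hard-coded map.

```go
package main

import (
	"crypto/rand"
	"encoding/hex"
	"fmt"
)

// newCorrelationID returns a random 128-bit value as 32 hex characters.
// The format is an assumption; MacNoise's real IDs may look different.
func newCorrelationID() (string, error) {
	b := make([]byte, 16)
	if _, err := rand.Read(b); err != nil {
		return "", err
	}
	return hex.EncodeToString(b), nil
}

// auditTag links one scenario step to the run it belongs to (hypothetical).
type auditTag struct {
	CorrelationID string
	Step          int
	Module        string
}

// tagSteps gives every step in a scenario run the same correlation ID plus
// its 1-based step number.
func tagSteps(modules []string) ([]auditTag, error) {
	id, err := newCorrelationID()
	if err != nil {
		return nil, err
	}
	tags := make([]auditTag, len(modules))
	for i, m := range modules {
		tags[i] = auditTag{CorrelationID: id, Step: i + 1, Module: m}
	}
	return tags, nil
}

// missing returns the modules from a run with no matching telemetry, given the
// set of modules a SIEM query for that correlation ID actually returned.
func missing(tags []auditTag, observed map[string]bool) []string {
	var gaps []string
	for _, t := range tags {
		if !observed[t.Module] {
			gaps = append(gaps, t.Module)
		}
	}
	return gaps
}

func main() {
	tags, err := tagSteps([]string{"proc_spawn", "net_connect", "tcc_fda"})
	if err != nil {
		panic(err)
	}
	fmt.Println("run:", tags[0].CorrelationID)
	// Pretend the SIEM only returned process telemetry for this run.
	fmt.Println("gaps:", missing(tags, map[string]bool{"proc_spawn": true})) // gaps: [net_connect tcc_fda]
}
```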

Closing

The code repo is on my GitHub: https://github.com/0xv1n/macnoise. I really hope this tool offers value to all the defenders, engineers, and researchers out there. I am also looking for people to help manage, maintain, and contribute to this project.